We also note that Google App Engine used to do this, but unfortunately it seems to have been discontinued.

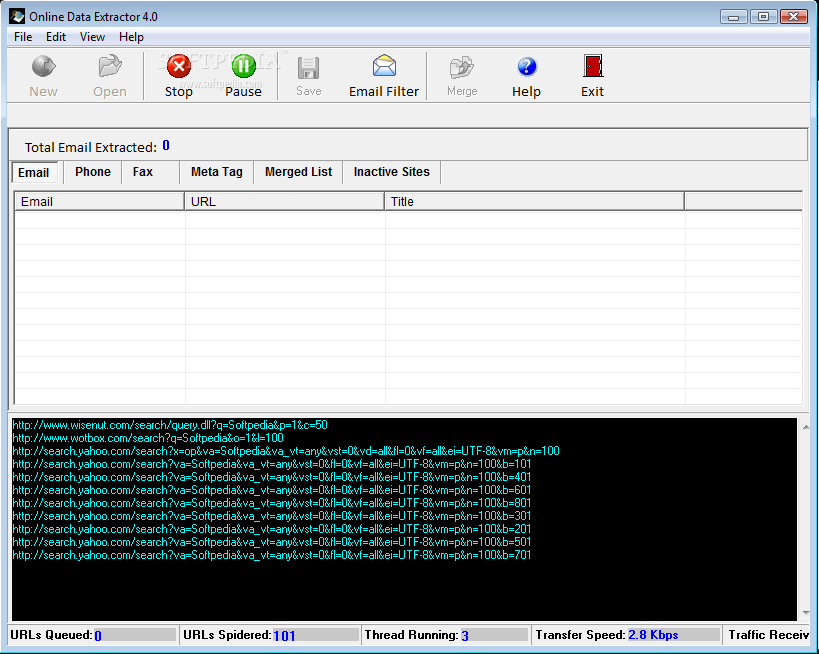

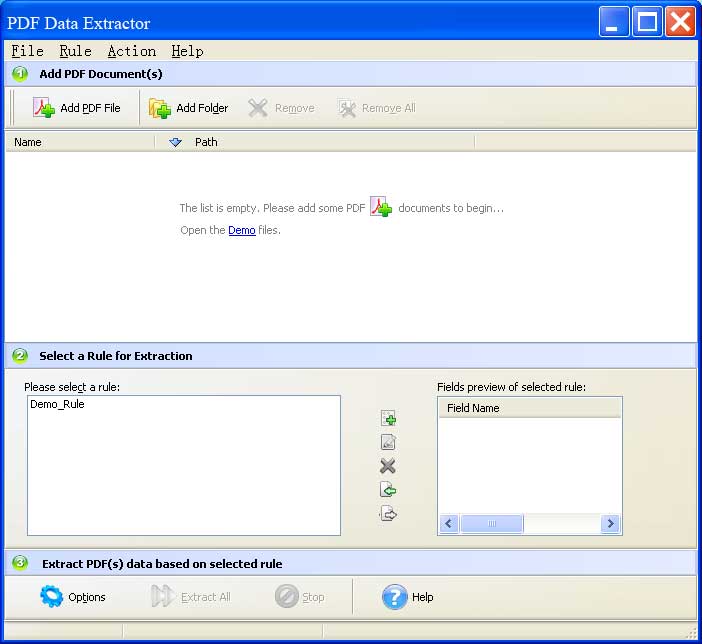

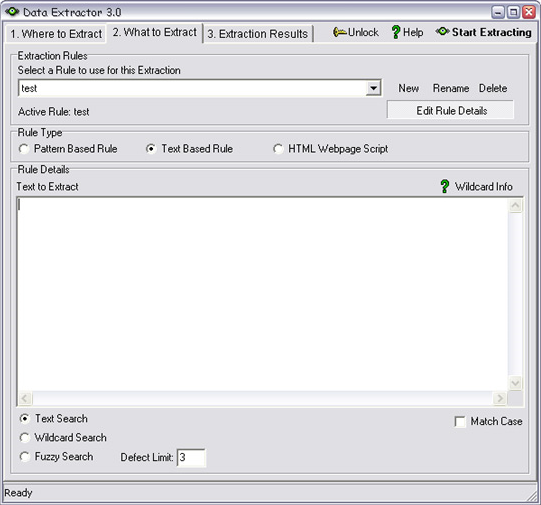

Amazon Textract is a machine learning (ML) service that automatically extracts text, handwriting, and data from scanned documents. It goes beyond simple optical character recognition (OCR) to identify, understand, and extract data from forms and tables. Today, many companies extract data from scanned documents such as PDFs, images, tables, and forms manually, or through simple OCR software that requires manual configuration (which often must be updated when the form changes). To overcome these manual and expensive processes, Textract uses ML to read and process any type of document, accurately extracting text, handwriting, tables, and other data with no manual effort. You can quickly automate document processing and act on the information extracted, whether you're automating loan processing or extracting information from invoices and receipts. Textract can extract the data in minutes instead of hours or days. Additionally, you can add human reviews with Amazon Augmented AI to provide oversight of your models and check sensitive data.

A classic example of an important government report published as PDF only

Generic (PDF to text)

- PDFMiner - a tool for extracting information from PDF documents. Unlike other PDF-related tools, it focuses entirely on getting and analyzing text data. PDFMiner allows one to obtain the exact location of text in a page, as well as other information such as fonts or lines. It includes a PDF converter that can transform PDF files into other text formats (such as HTML). It has an extensible PDF parser that can be used for purposes other than text analysis. In our trials PDFMiner has performed excellently and we rate it as one of the best tools out there.
- pdftohtml - a utility which converts PDF files into HTML and XML formats. One of the better tools for tables, but we have found PDFMiner somewhat better for a while.
- pdftoxml - command-line utility to convert PDF to XML, built on poppler.
- Docsplit - a command-line utility and Ruby library for splitting apart documents into their component parts: searchable UTF-8 plain text (via OCR if necessary), page images or thumbnails in any format, PDFs, single pages, and document metadata (title, author, number of pages…).
- A tool that started as an alternative to poppler's pdftoxml, which didn't properly decode CID Type2 fonts in PDFs.
- pdf2htmlEX - converts PDF to HTML without losing text or format. Primarily focused on producing HTML that exactly resembles the original PDF. Of limited use for straightforward text extraction, as it generates CSS-heavy HTML that replicates the exact look of a PDF document.
- pdf.js - you probably want a fork like pdf2json or node-pdfreader that integrates this better with Node. Max Ogden has a list of Node libraries and tools for working with PDFs, and there is a gist showing how to use pdf2json.
- Apache Tika - Java library for extracting metadata and content from all kinds of document types, including PDF.
- Apache PDFBox - Java library specifically for creating, manipulating and getting content from PDFs.

- Tabula - open-source, designed specifically for tabular data.
- A library created by ScraperWiki but now closed-source and powering PDFTables, so here is a fork.
- pdftohtml - one of the better tools for tables, but we have not used it for a while.
- pdf2csv - AGPLv3+, Python. scraptils has other useful tools as well; pdf2csv needs pdfminer=20110515.
- Docsumo - a free online tool for extracting tables from PDFs and images. It advertises real-time table extraction with 100% accuracy.
- Using scraperwiki + pdftoxml - see this recent tutorial: Get Started With Scraping – Extracting Simple Tables from PDF Documents.

Note that free data extraction software is often a free trial version. As with most free versions, there are limitations, typically on time or features.

- Give me Text - a free, easy-to-use open source web service that extracts text from PDFs and other documents using Apache Tika (built by Labs member Matt Fullerton).
- Is this open? Says at bottom of usage that it is powered by. Note that as of 2016 this seems more focused on conversion to structured XML for scientific articles, but it may still be useful.
- Scraperwiki - and this tutorial - no longer working as of 2016.

Existing proprietary free or paid-for services

There are many online (just do a search), so we do not propose a comprehensive list. Two we have tried and that seem promising are:

- A pay-per-page service focused on tabular data extraction, from the folks at ScraperWiki.
- A free service with an API; a very bare-bones site, but quite good results based on our limited testing.
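The pdftohtml and pdftoxml entries above describe converting a PDF to XML as a route to table extraction. As a minimal sketch of what one might do with that XML, the snippet below groups positioned text fragments into table rows. It assumes the XML follows the shape emitted by `pdftohtml -xml` (`<page>` elements containing `<text>` elements with integer `top` and `left` attributes). The sample document, the function name `rows_from_pdf_xml`, and the `tolerance` parameter are all hypothetical and chosen only for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample, written by hand in the shape of `pdftohtml -xml` output.
SAMPLE_XML = """<pdf2xml>
  <page number="1" height="1188" width="918">
    <text top="100" left="50" width="60" height="12" font="0">Region</text>
    <text top="100" left="200" width="40" height="12" font="0">Total</text>
    <text top="121" left="50" width="60" height="12" font="0">North</text>
    <text top="120" left="200" width="40" height="12" font="0">42</text>
    <text top="140" left="200" width="40" height="12" font="0">17</text>
    <text top="140" left="50" width="60" height="12" font="0">South</text>
  </page>
</pdf2xml>"""


def rows_from_pdf_xml(xml_text, tolerance=3):
    """Group text fragments into rows per page.

    Fragments whose `top` is within `tolerance` pixels of the first
    fragment of the current row are treated as the same row; cells in
    a row are ordered left to right by their `left` coordinate.
    Returns {page_number: [[cell, ...], ...]}.
    """
    root = ET.fromstring(xml_text)
    tables = {}
    for page in root.iter("page"):
        cells = []
        for el in page.iter("text"):
            # itertext() also picks up text nested in <b>/<i> children.
            content = "".join(el.itertext()).strip()
            if content:
                cells.append((int(el.get("top")), int(el.get("left")), content))
        cells.sort()  # top to bottom, then left to right

        rows = []  # each entry: (top of the row's first fragment, [(left, text), ...])
        for top, left, content in cells:
            if rows and top - rows[-1][0] <= tolerance:
                rows[-1][1].append((left, content))
            else:
                rows.append((top, [(left, content)]))

        tables[int(page.get("number"))] = [
            [text for _, text in sorted(row)] for _, row in rows
        ]
    return tables


if __name__ == "__main__":
    for number, rows in rows_from_pdf_xml(SAMPLE_XML).items():
        for row in rows:
            print(number, ",".join(row))
```

For a real document, the XML would first be produced with something like `pdftohtml -xml input.pdf output` and read from the resulting file. Grouping by vertical position alone only works for simple tables; merged cells, wrapped text, or multi-column layouts would need column detection as well, which is the kind of work tools such as Tabula aim to handle.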
